The chip is no longer the problem

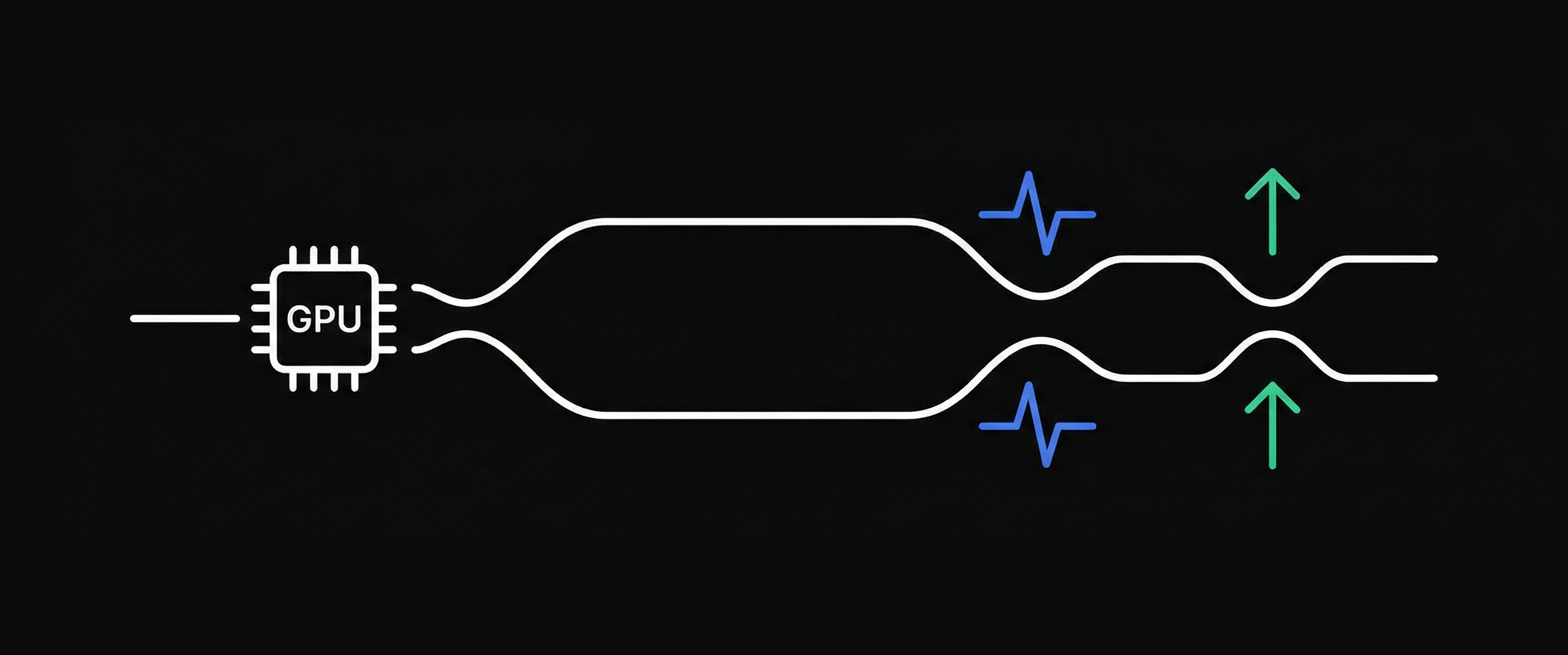

In 2024, NVIDIA shipped hundreds of thousands of H100 GPUs. In 2025, the B200s arrived en masse. Yet deployment times for neoclouds and hyperscalers are not getting shorter; they are getting longer. Why?

Because the bottleneck has shifted. It is no longer in semiconductors. It is in the available electrical power, in the time it takes to connect to the grid, and in the physical capacity to dissipate the heat generated by 100 kW racks.

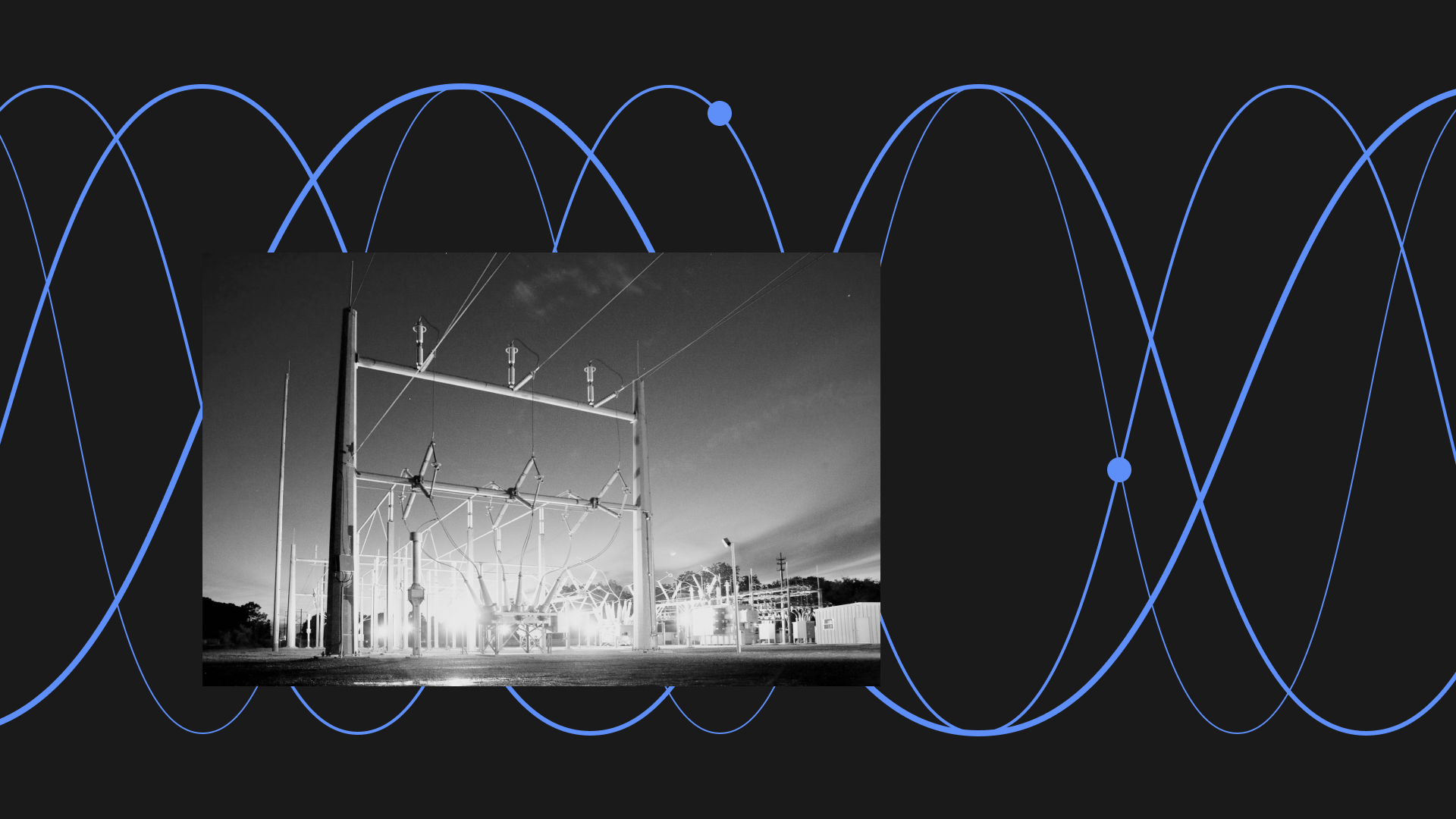

The State of the Power Grid in North America

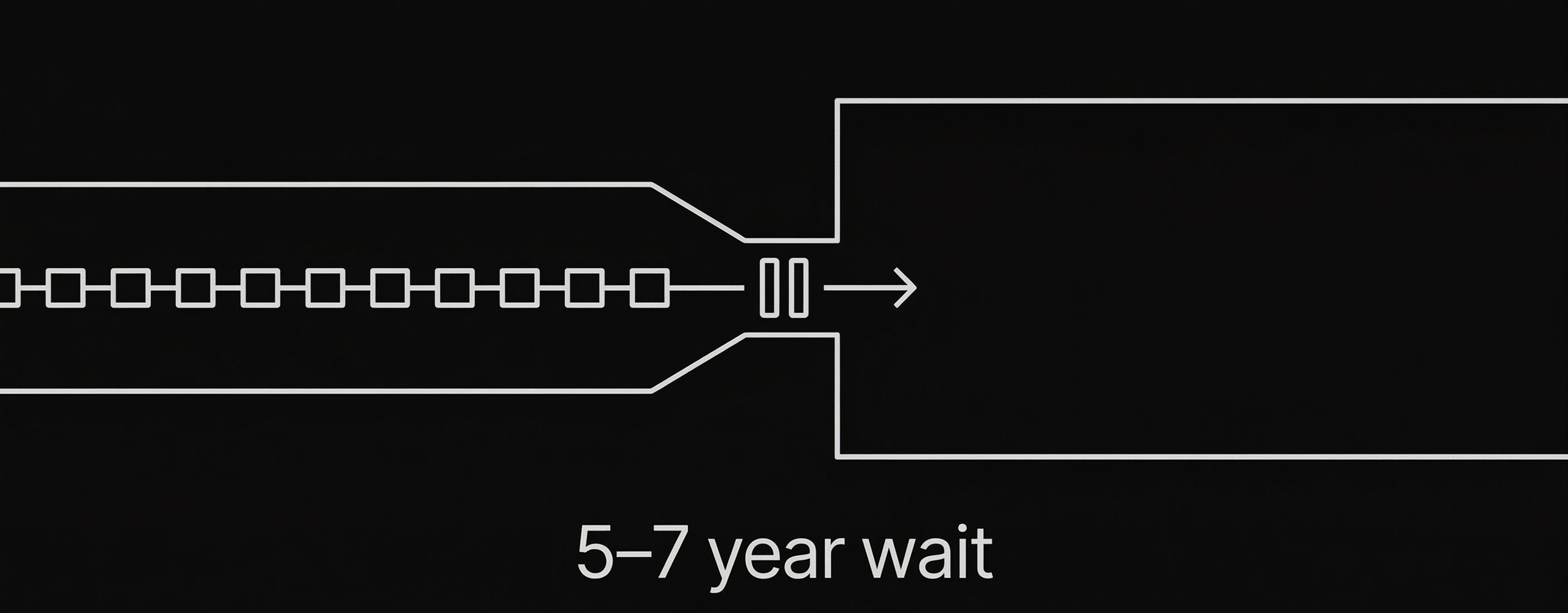

The major metropolitan areas (Northern Virginia, Phoenix, Dallas) have reached capacity. Lead times for new electrical connections are now measured in years, sometimes as many as 5 to 7. For a company that needs to deploy GPU clusters in 2025 or 2026, this is prohibitive.

Quebec is a strategic exception. Hydro-Québec operates a hydroelectric network with exportable capacity, near-zero carbon intensity, and industrial rates that are among the most competitive in the world.

A strategy aligned with the new constraints of AI infrastructure

As demand for HPC increases, power availability becomes a decisive factor in the deployment of new capacity. In this context, the question is no longer just how to build more efficient data centers, but where to locate them so that energy is accessible, low-carbon, and available on realistic timelines.

Sites designed from the outset for liquid cooling are better suited to this shift than facilities retrofitted after the fact. They can support higher rack densities and better absorb the loads associated with AI and HPC.

For cloud operators, infrastructure providers and HPC players, the challenge is therefore becoming more concrete: securing usable energy capacity in environments capable of supporting the next generation of digital infrastructure.
